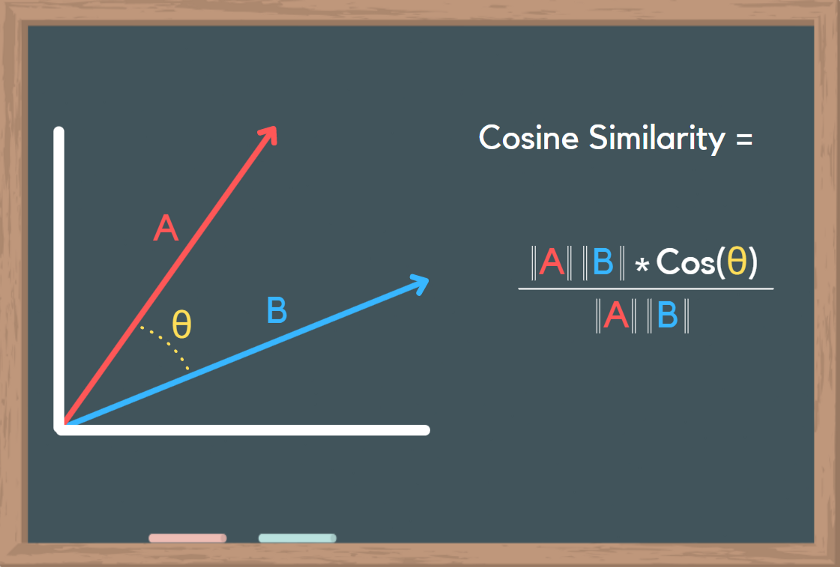

However, in practice I did not like the results as some of the stemming was too much. It’s missing an ‘e’ but you get the idea. For example, I could have Computer, Computing, Computation, and ideally a stemmer would take these words and combine them into one “Comput” word. I initially chose to use a stemmer based upon some suggestions I read that would help reduce the amount of “repeat” words. So I want to condense them as much as possible. There are quite a few words in all these thousands of posts. import pandas as pd import sklearn as sk import numpy as np import re from sklearn.feature_extraction.text import CountVectorizer from sklearn.feature_extraction.text import TfidfVectorizer This analysis will be leveraging Pandas, Numpy, Sklearn to assist in our discovery. All of these are susceptible to the same democratization seen on Reddit, with one caveat, not everyone can downvote. This forum is also a place to “Show HN” what kinds of projects they are working on or “Ask HN” a question that a particular user would like some help answering. This is a forum that is generally geared toward tech, science, and professional discussions based on interesting topics on the internet. Today, we will focus on the forum HackerNews. I’m also happy to cover more topics like this in the future, let me know if you’re interested in learning more about a certain function, method, or concept.But what makes a post interesting? Is there a formula to creating a “front page” post? What do these posts have in common? How can I find similar posts to those that I frequently read? How can I find a specific post that may be related to a topic of my interest? Or if you want to learn more about Natural Language Process you can check out my article on Sentiment Analysis. If you’re interested in learning more about cosine similarity I HIGHLY recommend this youtube video. Hopefully, you can take some of the concepts from these examples and make your own cool projects! 
As data scientists, we can sometimes forget the importance of math so I believe it’s always good to learn some of the theory and understand how and why our code works. I hope this article has been a good introduction to cosine similarity and a couple of ways you can use it to compare data. Whether you’re trying to build a face detection algorithm or a model that accurately sorts dog images from frog images, cosine similarity is a handy calculation that can really improve your results! It’s also possible to use HSL values instead of RGB or to convert images to black and white and then compare - these could yield better results depending on the type of images you’re working with. Just like with the text example, you can determine what the cutoff is for something to be “similar enough” which makes cosine similarity great for clustering and other sorting methods. 30% is still relatively high but that is likely the result of them both having large dark areas in the top left along with some small color similarities. Just as expected, these two images are significantly less similar than the first two. Here are the two pictures of dogs I’ll be comparing: This process is pretty easy thanks to PIL and Numpy! For this example, I’ll compare two pictures of dogs and then compare a dog with a frog to show the score differences. Luckily we don’t have to do all the NLP stuff, we just need to upload the image and convert it to an array of RGB values. You can probably guess that this process is very similar to the one above. There are also other methods of determining text similarity like Jaccard’s Index which is handy because it doesn’t take duplicate words into account. Cosine Similarity is incredibly useful for analyzing text - as a data scientist, you can choose what % is considered too similar or not similar enough and see how that cutoff affects your results. But, when you have 50% similarity in 1000-word documents you might be dealing with plagiarism. 
If two 50-word documents are 50% similar it’s likely because half of the words are “the”, “to”, “a”, etc. This process can easily get scaled up for larger documents which should create less room for statistical error in the calculation. These two documents are only 7.6% similar which makes sense because the word “Bee” doesn’t even appear once in the third document.
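To make the stemming idea concrete, here is a minimal sketch of how Computer, Computing, and Computation could collapse into a single “comput” token. It is a toy suffix stripper written purely for illustration, not the stemmer used in the analysis, and the `SUFFIXES` list and the `naive_stem` and `count_stems` helpers are hypothetical names.

```python
from collections import Counter

# Hypothetical suffix list for illustration only. Real stemmers (for example
# the Porter algorithm) use a much larger, carefully ordered rule set.
SUFFIXES = ("ation", "ing", "ers", "er", "es", "s")


def naive_stem(word, min_stem_len=3):
    """Strip the first matching suffix, keeping at least min_stem_len letters."""
    word = word.lower()
    for suffix in SUFFIXES:  # longer suffixes are listed before shorter ones
        if word.endswith(suffix) and len(word) - len(suffix) >= min_stem_len:
            return word[: -len(suffix)]
    return word


def count_stems(words):
    """Count how many input words map to each stem."""
    return Counter(naive_stem(w) for w in words)


if __name__ == "__main__":
    # The three variants from the text end up under one key.
    print(count_stems(["Computer", "Computing", "Computation"]))
    # Over-stemming: an unrelated word gets cut down as well.
    print(naive_stem("number"))
```

With these rules, all three “Comput…” words land on the same stem, but “number” is also reduced to “numb”. That kind of collision is one way stemming can end up being “too much”.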

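The text and image comparisons discussed in the cosine similarity sections come down to one formula: the dot product of two vectors divided by the product of their lengths. The sketch below is a rough, standard-library-only version of that calculation. The helper names (`tokenize`, `cosine_similarity`, `text_similarity`, `pixel_similarity`) are hypothetical, and the sample sentences and pixel lists are made up rather than taken from the article's data. Real images would first be loaded (for example with PIL) and flattened into RGB values, as described in the image section.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def cosine_similarity(vec_a, vec_b):
    """Cosine of the angle between two sparse vectors stored as dicts."""
    shared = set(vec_a) & set(vec_b)
    dot = sum(vec_a[k] * vec_b[k] for k in shared)
    norm_a = math.sqrt(sum(v * v for v in vec_a.values()))
    norm_b = math.sqrt(sum(v * v for v in vec_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an empty vector has no direction; treat it as dissimilar
    return dot / (norm_a * norm_b)


def text_similarity(doc_a, doc_b):
    """Compare two documents by their word-count vectors."""
    return cosine_similarity(Counter(tokenize(doc_a)), Counter(tokenize(doc_b)))


def pixel_similarity(pixels_a, pixels_b):
    """Compare two equally sized images given as flat lists of channel values."""
    if len(pixels_a) != len(pixels_b):
        raise ValueError("images must have the same number of values")
    return cosine_similarity(dict(enumerate(pixels_a)), dict(enumerate(pixels_b)))


if __name__ == "__main__":
    # Made-up sample documents, not the ones from the article.
    print(text_similarity("bee bee hive", "bee hive hive"))
    print(text_similarity("the bee likes honey", "frogs eat flies"))
    # Two tiny made-up "images": one red pixel versus one green pixel.
    print(pixel_similarity([255, 0, 0], [0, 255, 0]))
```

The first comparison comes out at about 0.8 because the two documents share both words in different proportions, while the other two print 0.0 because the vectors have nothing in common. Where to draw the “similar enough” cutoff between those extremes is the judgment call the article describes.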